Hi, this was the first coherent thing you wrote in this thread that’s worth responding to, so here goes.

I think you misunderstand entropy and its relationship to complexity. I’ll try to explain with a simple example, one that needs to be thought about in terms of information complexity.

Imagine you start with a glass of water and some concentrated ink, initially separate from each other. The amount of information required to describe these things is small. There is some water, uniformly distributed, and there is some ink, also uniformly distributed.

Now, you pour the ink into the water. Entropy being what it is, the ink ends up evenly distributed in the water. The result is inky water, uniformly distributed, which requires slightly less information to describe than the separated water and ink did. That is, we now just have some quantity of inky water, and we also know that it’s massively improbable that the ink will spontaneously separate from the water by chance. That’s just how entropy is.

Nothing too special here so far, I hope.

What’s most interesting, though, is how much information it takes to describe the state of the system while the ink is only partially dissolved. It is in fact massively more complex than either the initial or final state of the system.

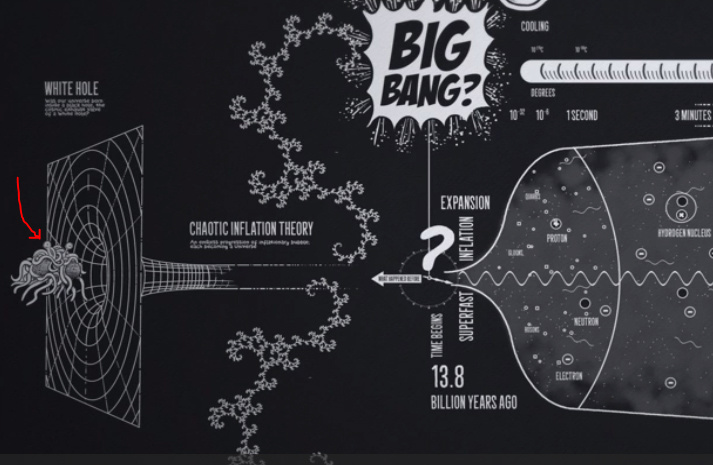
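
If you want to poke at this yourself, here is a minimal sketch in Python. It is a toy and rests on several assumptions of mine: the glass is a single row of cells, the ink is a smooth concentration that diffuses between neighbouring cells, and "information needed to describe the state" is approximated very crudely by rounding each cell to one of a few shades and counting the runs of identical shades (what a run-length encoding would have to store). The names `diffuse`, `run_count` and `simulate` are made up for this example.

```python
def diffuse(c, rate=0.25):
    """One diffusion step on a row of cells with closed ends (ink is conserved)."""
    n = len(c)
    out = []
    for i in range(n):
        left = c[i - 1] if i > 0 else c[i]       # closed end: no flow out
        right = c[i + 1] if i < n - 1 else c[i]
        out.append(c[i] + rate * (left - 2 * c[i] + right))
    return out


def run_count(c, levels=9):
    """Crude description length: round each cell to one of `levels` shades,
    then count runs of identical shades."""
    shades = [min(int(x * levels), levels - 1) for x in c]
    return 1 + sum(1 for a, b in zip(shades, shades[1:]) if a != b)


def simulate(cells=32, steps=2000, sample_every=100):
    """Start with ink on the left and water on the right, then let it mix.
    Returns the run count at the start and after every `sample_every` steps."""
    c = [1.0] * (cells // 2) + [0.0] * (cells - cells // 2)
    history = [run_count(c)]
    for step in range(1, steps + 1):
        c = diffuse(c)
        if step % sample_every == 0:
            history.append(run_count(c))
    return history


if __name__ == "__main__":
    print(simulate())
```

With these settings the list starts at 2 runs (ink, then water), climbs while the gradient between them spreads across several shades, and falls to a single run once everything is the same shade. A smooth one-dimensional model like this badly understates the tendrils and swirls in a real glass, so treat it as an illustration of the rise and fall only, not of its size.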

As it turns out, this is true of all such systems. The emergent complexity that we see all around us is the transient complexity inherent in the progression of entropy.

Eventually, it all inevitably goes away.

Entropy giveth and entropy taketh away.

If you want to understand how order emerges from this complexity amidst entropy, then you need to go read about Complex Systems Theory. Maybe start with Chaos Theory. The big clue in this is to look for recurring cycles in amongst the complexity. They’re like islands of cyclic stability (think order). Some of them reinforce the cyclic stability of others, and so larger islands of cyclic stability happen. Some of them catalyze the creation of more islands of stability like themselves. Those that do so spread. Those that do not, don’t.

It’s a short leap from there to evolution - the decidedly non-random selection from random changes in a complex system, selected on the basis of what survives and replicates.

Such processes can easily be observed in the wild, in structured experiments, or in simulation. In fact, one common approach to machine learning is the Genetic Algorithm - a simulation of evolution that actually works to find answers to real-world complex problems like face recognition.
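
To make the Genetic Algorithm idea concrete, here is a minimal sketch in Python. It is nowhere near face recognition: the "problem" is a toy I picked for illustration, namely evolving a string of bits towards all ones. The function names, population size, mutation rate and the keep-the-top-half selection rule are all my own assumptions, not a standard recipe. What it does show is the loop described above: random changes, followed by decidedly non-random selection of what gets to replicate.

```python
import random


def fitness(bits):
    """Toy fitness: the number of 1s in the bit string."""
    return sum(bits)


def evolve(length=40, pop_size=30, generations=60, mutation_rate=0.02, seed=0):
    """Run a tiny genetic algorithm; return the best fitness per generation."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(length)] for _ in range(pop_size)]
    best_per_gen = [max(map(fitness, pop))]
    for _ in range(generations):
        # Selection: the fitter half survives unchanged.
        pop.sort(key=fitness, reverse=True)
        survivors = pop[: pop_size // 2]
        children = []
        while len(survivors) + len(children) < pop_size:
            # Replication with variation: crossover of two survivors,
            # then random bit flips.
            mum, dad = rng.sample(survivors, 2)
            cut = rng.randrange(1, length)
            child = mum[:cut] + dad[cut:]
            child = [b ^ 1 if rng.random() < mutation_rate else b for b in child]
            children.append(child)
        pop = survivors + children
        best_per_gen.append(max(map(fitness, pop)))
    return best_per_gen


if __name__ == "__main__":
    history = evolve()
    print("best fitness, first generation:", history[0])
    print("best fitness, last generation: ", history[-1])
```

Because the survivors are carried over unmutated, the best score can never go down from one generation to the next. Nothing in the code knows what the answer looks like; it only ever compares scores.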

Finally, I’d like to point out that the process of evolution relies as much or more on cooperation as it does on competition. Survival of the fittest is just as likely to mean survival of those that work together well as the opposite. It does not stand in opposition to community, good will and cooperation between people or cultures. It fosters them.